NVIDIA has officially revealed GauGAN2, the latest version of the AI-based painting tool that went viral a couple of years ago, and it is better than ever.

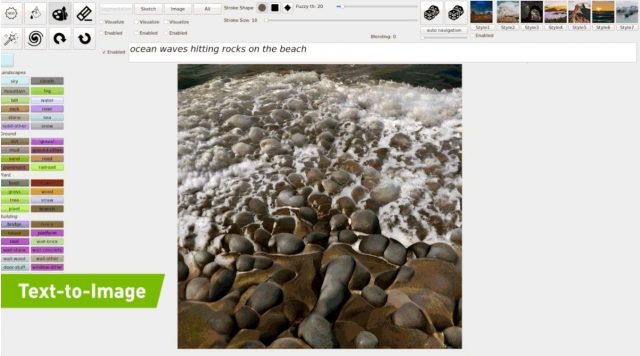

Powered by a more capable deep learning model, the new GauGAN2 is better at turning descriptive sentences and keywords into photorealistic images. That means those of us with shaky drawing skills (like me) are no longer held back and can channel our imagination without breaking a sweat. In layman’s terms, the model analyzes the phrase, sets up a base scene, and paints what it knows. Users can then add extra details such as “rocky beach” or “rainy day” to enhance or overhaul the entire scene, all in real time.

If you’re one of the geeky ones who knows a thing or two about deep learning, GauGAN2 combines segmentation mapping, inpainting, and text-to-image generation in a single GAN model, one of the very first in the world capable of delivering outputs of this quality. The researchers trained it on 10 million high-quality landscape images using the NVIDIA Selene supercomputer, part of the DGX SuperPOD family. The result is a neural network that learns the connection between descriptive words such as “winter”, “foggy”, and “rainbow” and the visuals they correspond to.
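
For the curious, here is a toy Python sketch of what those three kinds of input can look like as plain data: a segmentation map (a grid of label ids), an inpainting mask (which pixels to repaint), and a text prompt. To be clear, this is only an illustration under my own assumptions. The label names, the grid size, the function names, and the `conditioning` dictionary are all made up for the example and are not GauGAN2's actual input format or API.

```python
# Toy illustration only: these structures are assumptions, not GauGAN2's real inputs.

# Hypothetical label ids for a landscape segmentation map.
LABELS = {"sky": 0, "sea": 1, "rock": 2}


def make_segmentation_map(height, width):
    """Return a 2D grid of label ids: sky on top, sea below it, rock on the last row."""
    grid = []
    for row in range(height):
        if row < height // 2:
            label = LABELS["sky"]
        elif row < height - 1:
            label = LABELS["sea"]
        else:
            label = LABELS["rock"]
        grid.append([label] * width)
    return grid


def make_inpainting_mask(height, width, top, left, bottom, right):
    """Return a 2D grid where 1 marks pixels to regenerate and 0 marks pixels to keep."""
    return [
        [1 if top <= r < bottom and left <= c < right else 0 for c in range(width)]
        for r in range(height)
    ]


def one_hot(seg_map, num_classes):
    """Expand label ids into one binary channel per class."""
    return [
        [[1 if cell == k else 0 for cell in row] for row in seg_map]
        for k in range(num_classes)
    ]


seg_map = make_segmentation_map(4, 6)
mask = make_inpainting_mask(4, 6, top=1, left=2, bottom=3, right=5)
channels = one_hot(seg_map, len(LABELS))
prompt = "rocky beach, rainy day"  # free-text condition, as in the examples above

# Hypothetical bundle of the three conditions a single model could receive together.
conditioning = {"segmentation": channels, "mask": mask, "text": prompt}
```

The point of the sketch is simply that all three conditions are different views of the same scene, which is why it is plausible for one model to accept them together rather than needing a separate network for each.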

Check out the full video below to see GauGAN2’s impressive capabilities for yourself, or head over to the site to try it out.