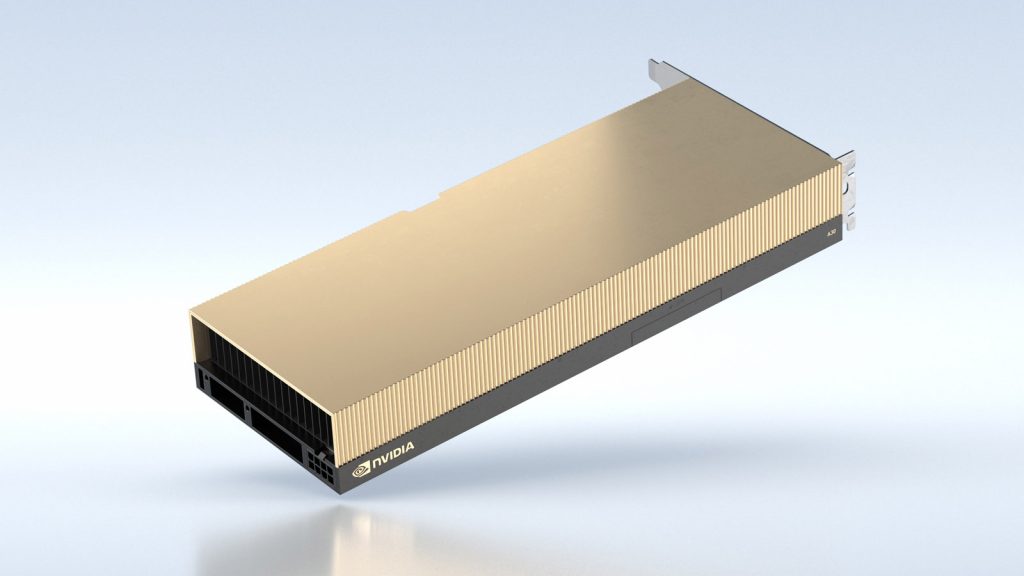

NVIDIA has announced two new server-class GPUs, the A30 and the A10, both designed for and dedicated to AI computing.

The A30, which runs at 165W, delivers ample performance in industry-standard servers for AI inference and mainstream computing workloads such as recommender systems, computer vision, and conversational AI. The A10 leans more towards accelerating deep learning, CAD work, cloud gaming, and interactive rendering, workloads that generally need AI and graphics to work together on a common infrastructure. Deployed through NVIDIA's native Virtual GPU software, both cards make provisioning and managing GPU power for different use cases and professions easier than before.

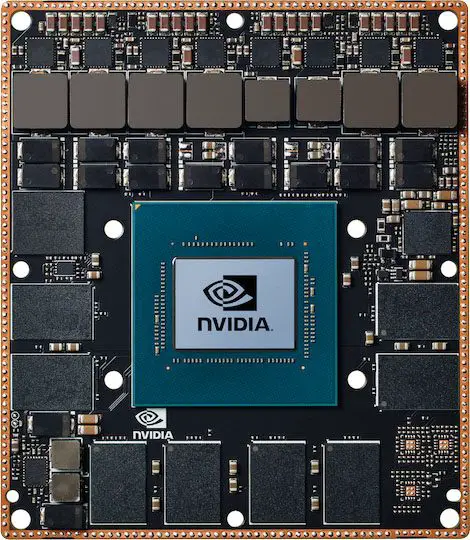

On the other hand, the NVIDIA Jetson, the company's own offering for AI computing, uses the NVIDIA Xavier System-on-Module (SoM), which matches most AI-optimized servers in its foundation: the brand's unified architecture and CUDA-X software stack. In short, the small and compact package can do what the larger hardware does at just shy of 30W, albeit at a far smaller scale of computing.

As a side note, the A30 and A10 debuted as part of NVIDIA's highlighted results on MLPerf, an industry-standard benchmark that measures AI performance across a variety of simulated workloads. NVIDIA, being a leader in AI hardware and software, submitted results for every test in every category and posted top-tier performance numbers across the board.

Availability

The NVIDIA A30 and A10 are expected to arrive this summer in servers from major manufacturers such as Cisco, Dell Technologies, Lenovo, Inspur, and Hewlett Packard Enterprise, including NVIDIA-Certified systems, while the Jetson AGX Xavier / Jetson Xavier SoM is available through distributors globally.