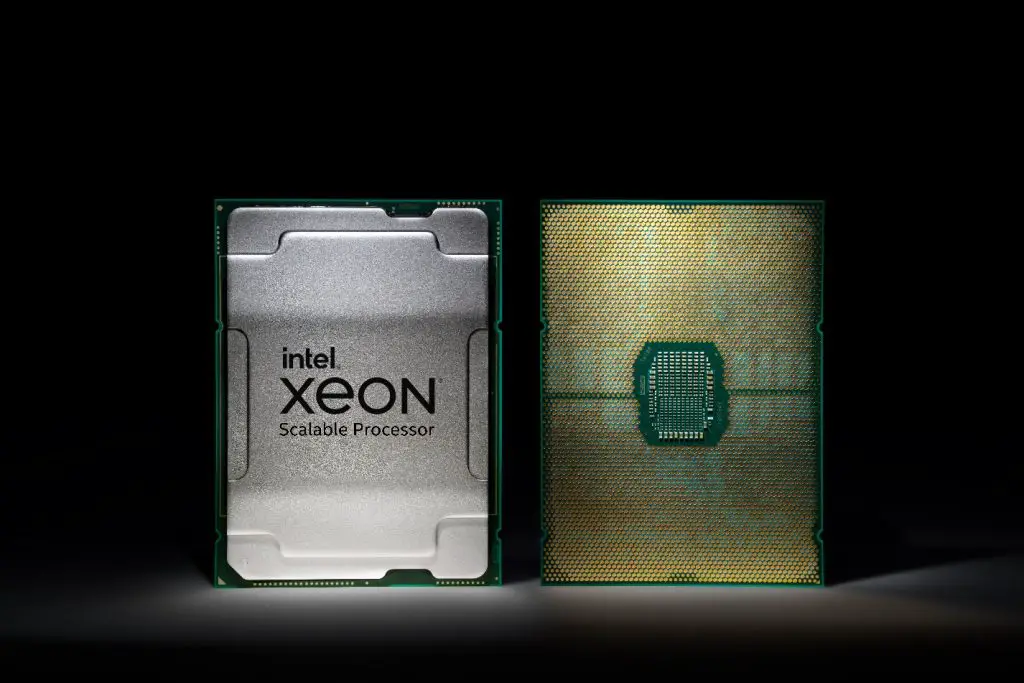

Today, Intel released the latest generation of its server-exclusive processors, the 3rd Gen Intel Xeon Scalable CPU.

Code-named ‘Ice Lake’, the new chips are built on a 10nm manufacturing process that packs in more transistors, allowing up to 40 cores per processor and delivering up to 2.65 times higher average performance compared to hardware from around 5 years ago. Each socket supports up to 6TB of memory across 8 channels of DDR4-3200, along with 64 lanes of PCIe 4.0. All in all, Intel reports an average 46% performance improvement over the prior generation.
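To put the memory and I/O figures above in perspective, here is a rough back-of-the-envelope sketch of the theoretical peak bandwidth per socket. It is not an Intel figure: it assumes a standard 64-bit (8-byte) data bus per DDR4 channel, and the usual PCIe 4.0 signaling rate of 16 GT/s per lane with 128b/130b encoding. Real-world throughput will be lower.

```python
# Back-of-the-envelope peak bandwidth per socket, from the headline specs.
# The constants marked "assumption" are general DDR4 / PCIe 4.0 parameters,
# not numbers taken from Intel's announcement.

MEMORY_CHANNELS = 8               # from the article: 8 memory channels
DDR4_TRANSFERS_PER_SEC = 3200e6   # DDR4-3200: 3200 mega-transfers per second
BYTES_PER_TRANSFER = 8            # assumption: 64-bit data bus per channel

PCIE_LANES = 64                   # from the article: 64 lanes of PCIe 4.0
PCIE4_TRANSFERS_PER_SEC = 16e9    # assumption: 16 GT/s per lane
PCIE4_ENCODING = 128 / 130        # assumption: 128b/130b line encoding


def memory_bandwidth_gbs(channels=MEMORY_CHANNELS):
    """Theoretical peak DRAM bandwidth in GB/s."""
    return channels * DDR4_TRANSFERS_PER_SEC * BYTES_PER_TRANSFER / 1e9


def pcie_bandwidth_gbs(lanes=PCIE_LANES):
    """Theoretical peak PCIe bandwidth in GB/s, per direction."""
    bits_per_sec = lanes * PCIE4_TRANSFERS_PER_SEC * PCIE4_ENCODING
    return bits_per_sec / 8 / 1e9


if __name__ == "__main__":
    print(f"Memory: {memory_bandwidth_gbs():.1f} GB/s")            # Memory: 204.8 GB/s
    print(f"PCIe 4.0: {pcie_bandwidth_gbs():.1f} GB/s each way")   # PCIe 4.0: 126.0 GB/s each way
```

In other words, under these assumptions a single socket tops out at roughly 205 GB/s to memory and about 126 GB/s in each direction over PCIe.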

While Intel has lost quite a bit of the spotlight to its competitor AMD, the brand’s dedicated solutions for cloud, AI, enterprise, HPC, networking, security and edge applications have always been an attraction for many of its clients, as they are ready to use and fully deployable on Intel’s latest hardware offerings. Additionally, Intel bills the 3rd Gen Xeon Scalable CPU as the only data center processor with built-in AI acceleration, which it says improves performance across workloads that are either assisted by AI or targeting AI, such as machine learning. According to Intel's results, that gives Team Blue a 74% lead when pitted against the AMD EPYC 7763.

Security has also been a pain point for Intel, as researchers have discovered many side-channel exploits that allow access to sensitive data. However, the brand promises stronger protection for the 3rd Gen Intel Xeon Scalable processor through the implementation of Intel SGX, Intel Total Memory Encryption and Intel Platform Firmware Resilience, which can be found on all 2-socket CPU models of this generation. Cryptographic algorithms for anonymizing data are also a focus for Intel, with the Crypto Acceleration feature speeding up these tasks without hurting system responsiveness, which would otherwise lead to latency issues.

According to Intel, the 3rd Gen Intel Xeon Scalable processor also works in developers’ favor through oneAPI, an open, cross-architecture programming model that lets them make full use of the CPU’s AI and encryption performance, with access to advanced compilers, libraries, and analysis and debug tools. That aside, Intel is also confident that cloud service providers are ready to deploy these chips to support the growing demands of cloud computing, while network operators will be able to take advantage of them to shorten deployment timeframes for vRAN, NFVI, virtual CDN and more.

As a side note, given that these chips are manufactured locally in Kulim, it sounds like local enterprises can get hold of them without running into too much trouble. That’s good.